ChatGPT has been publicly available for over three years now, and generative AI is woven into the tools students use every day: web search, word processors, code editors. You might assume that by now, most programming instructors have figured out how to handle it. But when my collaborators and I went looking for computing instructors who had made meaningful changes to their course materials in response to GenAI, we were surprised by how few we found. Many instructors had updated their course policies, but far fewer had actually redesigned their assignments, assessments, or teaching practices.

I’m Sam Lau from UC San Diego, and together with Kianoosh Boroojeni (Florida International University), Harry Keeling (Howard University), and Jenn Marroquin (Google), we’re presenting a research paper at CHI 2026 on this topic. We wanted to understand: What happens when programming instructors try to shape how students interact with GenAI tools, and what gets in their way?

To find out, we interviewed 13 undergraduate computing instructors who had gone beyond policy changes to make concrete updates to their courses: redesigning assignments, building custom tools, or overhauling assessments. We also surveyed 169 computing faculty, including a substantial proportion from minority-serving institutions (51%) and historically Black colleges and universities (17%). What we found is that instructors are doing a kind of design work that nobody trained them for, under conditions that make it very hard to succeed.

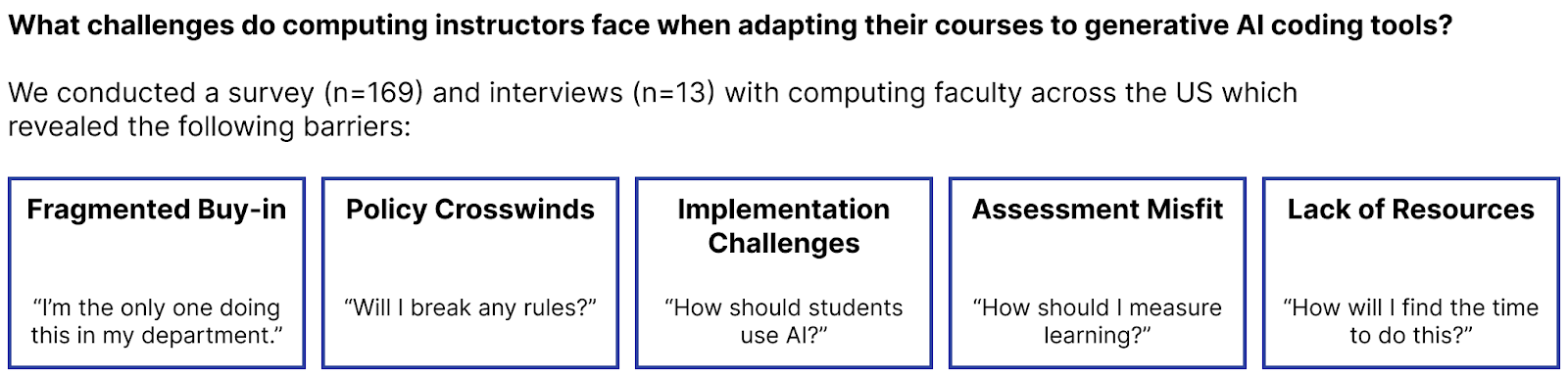

Here’s a summary of our findings:

What is “emergency pedagogical design”?

We call this work emergency pedagogical design, drawing an analogy to the “emergency remote teaching” that instructors had to perform when COVID-19 forced courses online overnight. Just as emergency remote teaching was distinct from carefully designed online learning, emergency pedagogical design is distinct from thoughtfully integrating AI into pedagogy. Instructors are reacting in real time, with limited resources and no playbook.

We observed four defining properties. First, the work is reactive: Instructors didn’t plan for GenAI; they’re retrofitting courses that were designed before these tools existed. Second, it’s indirect: Unlike a UX designer who can change an interface, instructors can’t modify ChatGPT or Copilot, so they can only try to influence student behavior through policies, assignments, and course infrastructure. Third, instructors rely on ambient evidence like office-hour conversations and staff anecdotes rather than controlled evaluations. And fourth, instructors feel pressure to act now rather than wait for research or best practices to emerge.

Five barriers instructors keep hitting

Across our interviews and survey, five barriers came up again and again.

Fragmented buy-in. Most instructors we surveyed were personally open to adopting GenAI in their teaching: 81% described themselves as open or very open. But only 28% said the same about their colleagues. The result is that instructors who want to make changes often work in isolation, piloting course-specific tweaks without support or coordination from their departments.

Policy crosswinds. In the absence of top-down guidance, instructors set their own GenAI policies on a per-course basis. As one instructor put it, “From a student perspective, it’s the wild west. Some courses allow GenAI usage, some don’t.” Students have to track different rules for every class, and policies rarely distinguish between paid and unpaid tools, or between stand-alone chatbots and GenAI embedded in everyday software like code editors. 78% of surveyed instructors agreed that unequal access to paid GenAI tools could worsen disparities in learning outcomes.

Implementation challenges. Instructors wanted to shape how students used GenAI, not just whether they used it, but their options were indirect. Some made small adjustments, like permitting GenAI in specific labs. Others went further: One instructor required students to submit design documents before asking GenAI to generate code; another built a custom chatbot that offered conceptual help without writing code for students. 80% of surveyed instructors rated GenAI integration as important or very important, but only 37% reported often using GenAI tools in course activities.

Assessment misfit. Several instructors described a striking pattern: Students performed well on take-home assignments but struggled on proctored assessments. One instructor reported that a third of his 450-person class scored zero on a skill demonstration that required writing a short function from scratch, even though assignment grades had been fine. The problem wasn’t just that students were using GenAI to complete homework; it was that instructors had no reliable way to see how students were interacting with these tools day-to-day. Some instructors responded by shifting credit toward oral “stand-up” meetings and written explanations, but this created new challenges around grading consistency and staffing.

Lack of resources. This was the barrier that tied everything together. 53% of surveyed instructors said they lacked sufficient resources to implement GenAI effectively, and 62% said they didn’t have enough time given their workload. The gap was especially stark at minority-serving institutions (MSIs): MSI instructors were more likely than other instructors to report insufficient resources (62% vs. 43%) and heavier teaching loads (70% vs. 54% teaching three or more courses per term). All 10 respondents who taught six or more courses per term were from MSIs. Meanwhile, the interviewees who had made the most ambitious changes tended to have lighter teaching loads, external funding, or the ability to hire many course staff. These are advantages that most instructors don’t have.

What needs to change

One striking finding is that the instructors doing the most to improve student-AI interactions were also the most privileged in terms of time, staffing, and funding. One instructor needed over 50 course staff members to run weekly stand-up meetings for 300 students. Others spent their own money on API costs. These are not scalable models.

If only well-resourced institutions can afford to adapt their curricula, GenAI risks widening the very inequities that education is supposed to reduce. Students at under-resourced institutions could fall further behind, not because their instructors don’t care but because those instructors are teaching six courses a term with no additional support.

When surveyed instructors were asked what would help most, the top answers were faculty training and support, evidence of GenAI’s impact, and funding. What if universities, funders, and HCI researchers worked together with instructors to make emergency pedagogical design sustainable for all instructors, not just the most privileged ones?

Check out our paper and email me (lau@ucsd.edu) if you’d like to discuss anything related to it! And if you’re an instructor yourself, we’re building free resources and curriculum over at https://www.teachcswithai.org/.