Anthropic today released a research preview of Claude Code Review, which uses agents to catch bugs in every pull request. The preview is available to Team and Enterprise users.

With AI tools generating code at a remarkable pace, code review is becoming a bottleneck. As a result, most PRs get a light scan rather than a deep review, which could let bugs slip into production.

In the announcement, Anthropic explained that when a pull request is opened, the agents look for bugs, verify them to filter out false positives, and rank them by severity. The result appears in the PR as a high-signal overview comment, along with in-line comments for specific bugs. The number of agents scales with the change: larger, more complex changes get more agents and a deeper read than trivial ones. The company said that based on its testing, the average review takes approximately 20 minutes.
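To make the verify, filter, and rank flow concrete, here is a minimal Python sketch of the triage step. It is an illustration only, not Anthropic's implementation: the `Finding` type, the `triage` function, the severity labels, and the sample findings are all hypothetical, since the announcement does not describe its internals at this level.

```python
from dataclasses import dataclass

# Hypothetical severity scale; the announcement does not name its levels.
SEVERITY_ORDER = {"critical": 0, "major": 1, "minor": 2}


@dataclass
class Finding:
    file: str
    line: int
    message: str
    severity: str   # key into SEVERITY_ORDER
    verified: bool  # True if a verification pass confirmed the bug


def triage(findings):
    """Drop unverified findings, then sort the rest from most to least severe."""
    confirmed = [f for f in findings if f.verified]
    return sorted(confirmed, key=lambda f: SEVERITY_ORDER[f.severity])


# Made-up findings for illustration.
findings = [
    Finding("auth.py", 42, "token expiry never checked", "critical", True),
    Finding("utils.py", 7, "possible unused import", "minor", False),
    Finding("api.py", 88, "unhandled None response", "major", True),
    Finding("api.py", 12, "typo in log message", "minor", True),
]

ranked = triage(findings)
for f in ranked:
    print(f"[{f.severity}] {f.file}:{f.line} {f.message}")
```

In this sketch the unverified finding in `utils.py` is treated as a false positive and dropped, and the remaining three are listed with the critical one first.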

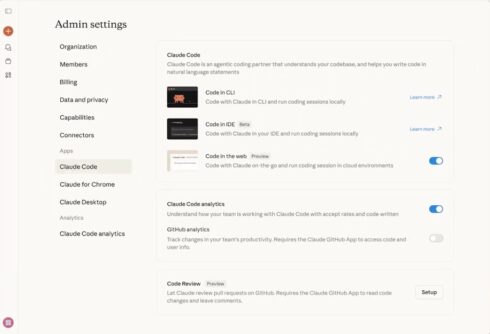

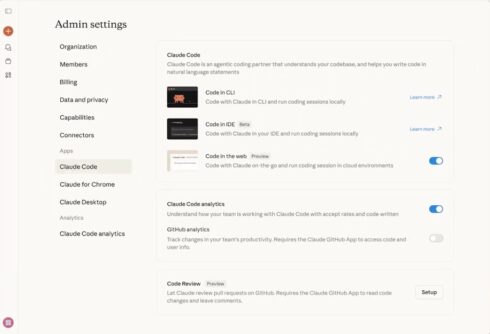

Claude Code Review is a more in-depth solution than the Claude Code GitHub Action. For developers, no configuration is required; reviews start automatically whenever a PR is opened. Admins must first enable Code Review in their Claude Code settings, install the GitHub App, and select the repositories to run reviews on, the company explained.

“We run Code Review on nearly every PR at Anthropic,” the company wrote in a blog announcing the release. “Before, 16% of PRs got substantive review comments. Now 54% do. It won’t approve PRs — that’s still a human call — but it closes the gap so reviewers can actually cover what’s shipping.”

Reviews are billed on token usage and generally average $15–25, scaling with PR size and complexity, the company wrote.
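For teams budgeting around that figure, a back-of-the-envelope estimate is easy to sketch. The snippet below assumes every PR is reviewed and falls within the reported $15 to $25 average; the 200-PRs-per-month volume and the `monthly_review_cost` helper are made up for illustration, and actual bills will vary with PR size and complexity.

```python
# Average per-review cost range reported by Anthropic, in US dollars.
COST_LOW, COST_HIGH = 15, 25


def monthly_review_cost(prs_per_month):
    """Return a (low, high) estimate in dollars for reviewing every PR."""
    return prs_per_month * COST_LOW, prs_per_month * COST_HIGH


# 200 PRs per month is a hypothetical volume, not a figure from the announcement.
low, high = monthly_review_cost(200)
print(f"${low:,} to ${high:,} per month")  # prints "$3,000 to $5,000 per month"
```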

Explore the docs for more information.